big.LITTLE: ARM's Strategy For Efficient Computing

ARM vs. Intel: Who Has the Superior Solution?

To date, Intel has eschewed a big.LITTLE approach in favor of DVFS -- Dynamic Voltage and Frequency Scaling. As the name implies, DVFS reduces power consumption by dropping the CPU into the lowest possible power state, transitioning out of that state when more performance is needed, and returning to it once demand drops off again. One of the problems with comparing the two approaches is that "performance," in this case, covers three separate things: task completion time, the total energy consumed while completing that task, and how effectively the operating system manages the CPU's power conservation features.
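To see why completion time and energy have to be weighed together, here is a minimal sketch using the textbook CMOS dynamic power approximation (P = C·V²·f). Every constant in it (capacitance, voltages, frequencies, idle power, cycle count) is a made-up illustrative value, not a measurement of any Intel or ARM part, and the function names are hypothetical.

```python
# Illustrative model only: every constant here is hypothetical, not measured data.

def dynamic_power(capacitance, voltage, freq_hz):
    """Textbook CMOS dynamic power approximation: P = C * V^2 * f (watts)."""
    return capacitance * voltage ** 2 * freq_hz


def task_energy(cycles, capacitance, voltage, freq_hz, idle_power, window_s):
    """Run a task of `cycles` cycles, then idle for the rest of a fixed window.

    Returns (completion_time_seconds, total_energy_joules).
    """
    run_time = cycles / freq_hz
    if run_time > window_s:
        raise ValueError("task does not finish within the window")
    active = dynamic_power(capacitance, voltage, freq_hz) * run_time
    idle = idle_power * (window_s - run_time)
    return run_time, active + idle


# Hypothetical workload and chip parameters (assumptions for illustration).
CYCLES = 1e9          # work to be done, in clock cycles
CAPACITANCE = 1e-9    # effective switched capacitance, farads
IDLE_POWER = 0.05     # power drawn while idle, watts
WINDOW = 2.0          # observation window, seconds

# High-performance state: higher voltage and frequency.
fast = task_energy(CYCLES, CAPACITANCE, 1.2, 2e9, IDLE_POWER, WINDOW)
# Low-power state: lower voltage and frequency.
slow = task_energy(CYCLES, CAPACITANCE, 0.9, 1e9, IDLE_POWER, WINDOW)

for label, (t, e) in (("fast state", fast), ("slow state", slow)):
    print(f"{label}: {t:.2f} s to finish, {e:.3f} J over the window")
```

With these assumed numbers, the faster state finishes the task sooner but uses more energy over the same window, which is exactly the trade-off that makes a single "performance" figure hard to pin down. Different idle power or voltage assumptions can flip the result.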

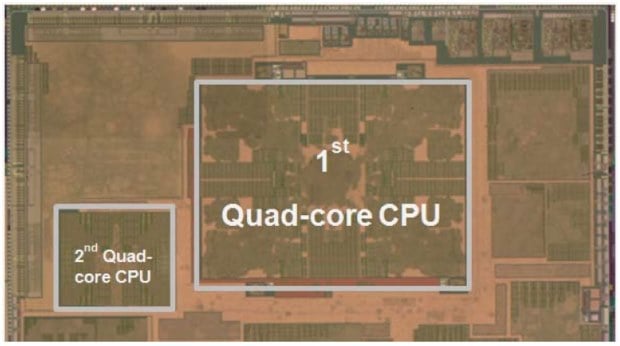

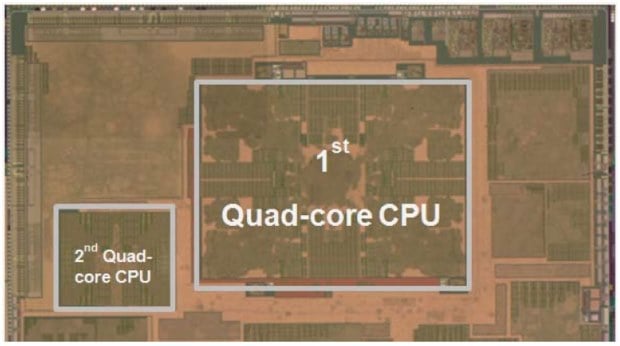

Samsung's Exynos Octa was supposed to be big.LITTLE's major debut, but all available evidence suggests that the CPU's implementation is broken. That would explain why Samsung's flagship, the Galaxy S4, recently launched with a Qualcomm processor inside the US version, with the much-touted Exynos 5 Octa relegated to the international versions of the phone and the Korean model. Reports indicate that the CCI-400 (Cache Coherent Interconnect) module that makes big.LITTLE possible is disabled on the device and can't be enabled via software.

As far as triumphant debuts are concerned, that's problematic, but it says nothing about the underlying usefulness of big.LITTLE as a whole. ARM showed us demos of asymmetric configurations in action, and it's clear that chips that implement Global Task Scheduling (GTS) can save power compared to those that don't.

big.LITTLE MP (Global Task Scheduling on an asymmetric core implementation. Cumulative energy for a conventional dual-core vs. three A7s + two A15s is shown in the center.)
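The article doesn't spell out how Global Task Scheduling (GTS) decides where a task runs. As a rough illustration only, the toy sketch below assumes a simple scheme in which each task's tracked load is compared against an up-migration and a down-migration threshold. The threshold values, function names, and task names are all hypothetical and are not ARM's actual scheduler logic.

```python
# Toy sketch of threshold-based task placement on an asymmetric CPU.
# Thresholds and loads are hypothetical, not values from ARM's scheduler.

UP_THRESHOLD = 0.8    # assumed: migrate a task to a big core above this load
DOWN_THRESHOLD = 0.3  # assumed: migrate it back to a LITTLE core below this load


def place_task(current_cluster, load):
    """Return 'big' or 'LITTLE' for a task given its tracked load (0.0 to 1.0)."""
    if current_cluster == "LITTLE" and load > UP_THRESHOLD:
        return "big"
    if current_cluster == "big" and load < DOWN_THRESHOLD:
        return "LITTLE"
    # Between the thresholds the task stays put, which avoids ping-ponging.
    return current_cluster


def schedule(tasks):
    """Map each task name to its new cluster.

    `tasks` is a dict of name -> (current_cluster, load).
    """
    return {name: place_task(cluster, load)
            for name, (cluster, load) in tasks.items()}


# Hypothetical snapshot of running tasks.
snapshot = {
    "ui_render": ("LITTLE", 0.95),   # heavy burst: should move up
    "mail_sync": ("big", 0.10),      # nearly idle: should move down
    "music": ("LITTLE", 0.40),       # light and steady: stays on LITTLE
}
placement = schedule(snapshot)
print(placement)
```

The gap between the two thresholds is a design choice in this sketch: it keeps a task with a load hovering around one value from bouncing between clusters on every scheduling pass.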

The other fact that's worth pointing out is that while big.LITTLE is an alternative to the kind of frequency and voltage scaling that Intel uses, ARM processors are compatible with DVFS techniques as well. A manufacturer like Qualcomm or Samsung could implement a chip to use a DVFS approach rather than a big.LITTLE option, or could even implement both. Again, this is somewhat dependent on available foundry technology from TSMC or GlobalFoundries, but it's far from impossible.

So where does that leave us? Waiting for the next round of products, on both sides. Intel unquestionably needs Bay Trail to be a major success story; continuing softness in the PC market threatens the company's bottom line, and it needs to demonstrate a chip that can compete squarely on ARM's turf. big.LITTLE, meanwhile, has only a few adopters. That will likely change once the relevant patches are merged into Android and software support picks up, but this sort of chicken-and-egg scenario is always a slow process.
