NVIDIA Responds To GPU PhysX Cheating Allegation

As such, we not only got on the phone with Roy Taylor, NVIDIA's Director of Developer Relations, and Brian Burke, NVIDIA's Senior PR Manager, but also spent some time talking to and trading emails with Oliver Baltuch, President of Futuremark, and Mark Rein, Vice President of Epic Games. What follows is a quick-take transcript of our discussions with the various parties involved, along with relevant commentary from a third party (Rein of Epic) that speaks to the core of the allegations and to exactly what NVIDIA is or is not doing with its latest driver release.

Roy Taylor, NVIDIA Corp - Opening comments: NVIDIA acquired Ageia and its PhysX technology simply for the wow factor in games. High-end physics effects are just VERY cool. We're working with game developers who are using physics in things like naval warfare simulations, where if you fire a broadside and hit a ship just right, PhysX lets you watch it explode and blow apart into thousands of pieces. These sorts of effects just weren't possible with CPU-based processing if you wanted reasonable frame rates. The reason we bought Ageia was to enable our GPUs to provide these sorts of effects. Ageia's PhysX adoption problems were generally due to a small installed base and limited developer support. NVIDIA acquiring Ageia solved that problem.

The largest game development teams in the world are working with PhysX now. The new beta driver we released is just a sign of what's to come. GPUs are 5 to 20 times faster than CPUs at physics calculations, depending on the calculations you're talking about.

HotHardware: In terms of driver development and other product optimization efforts, what is NVIDIA's "official" (or unofficial) stance on optimizing for benchmarks?

NVIDIA, Taylor: Legitimate optimizations are reasonable and important, but only if they improve real game play. Optimizing solely for benchmarks is simply not done at NVIDIA. In terms of 3DMark Vantage, if you want to make a driver change and submit it, Futuremark's BDP (Benchmark Development Program) process has strict guidelines you must adhere to. We have followed them to a T, and this new beta driver has not been submitted for consideration.

HotHardware: We recently became aware of accusations being thrown about in the industry that NVIDIA is allegedly "cheating" in Futuremark's 3DMark Vantage benchmark by offloading PhysX-based physics calculations, which were previously sent to the CPU, to the GPU for PhysX processing with the latest 177.39 driver release. Could you explain exactly what your new PhysX-enabled drivers do with respect to 3DMark Vantage, which software libraries may or may not be replaced, and what impact this has on the benchmark?

NVIDIA, Taylor: During the benchmark install, a runtime library is updated to allow the test to run on the GPU, and then during the test, it points the benchmark DLLs to the GPU instead of the PPU or CPU. Nothing within the benchmark is changed at all: no software libraries, not even a line of code in the benchmark. The only thing that changes is the installer, nothing else. It has been said that the test results look different on screen when running with PhysX enabled on the GPU. Of course this is true, just as the results look different on screen when you test on a dual-core CPU versus a quad-core. This isn't a graphics test; it's a physics test. 3DMark Vantage specifically scales more complexity into a scene to take advantage of additional physics compute resources, which of course is why a run with PhysX processing on our GPUs looks so different, and better. This is by design in the benchmark, and if the folks accusing us had taken the time to run it, they would know that.

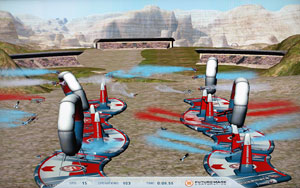

Test Notes: 3DMark Vantage was tested in "Performance" preset mode, with and without GPU PhysX enabled, on a Core 2 Extreme QX6850-powered test system running Windows Vista Ultimate with the ForceWare v177.39 graphics drivers and v8.06.12 PhysX drivers on a pair of GeForce 9800 GTX+ cards running in SLI mode. With GPU PhysX disabled, the overall 3DMark Vantage score is P11720, with a CPU Test 2 score of 14.95 FPS. With GPU PhysX enabled, the overall score jumps to P14298, with a CPU Test 2 score of 127.77 FPS. The screenshots clearly show the difference in workload output, with the GPU PhysX-enabled system processing many more operations. It should also be noted that even with the GPU PhysX drivers installed, there is an option in the 3DMark Vantage menu to disable the PPU, and this option works with both AGEIA's dedicated PhysX card and NVIDIA GPU PhysX.

Left - CPU Physics Processing, Right - GPU PhysX Processing

Source: 3DMark Vantage
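To put the numbers from the test notes in proportion, the short Python sketch below simply divides the GPU PhysX results by the CPU-only results. The dictionary keys and the `speedup` helper are our own illustrative names, not anything exposed by 3DMark Vantage. Keep in mind that, as the test notes point out, the GPU PhysX run also processes many more operations, so the CPU Test 2 ratio compares frame rates rather than identical amounts of physics work.

```python
# Figures copied from the test notes above (3DMark Vantage, "Performance" preset).
# Key names are illustrative only; they are not 3DMark Vantage identifiers.
cpu_only = {"overall": 11720, "cpu_test_2_fps": 14.95}
gpu_physx = {"overall": 14298, "cpu_test_2_fps": 127.77}


def speedup(before: float, after: float) -> float:
    """Return how many times larger `after` is than `before`."""
    return after / before


# Ratio of GPU PhysX result to CPU-only result for each metric.
test2 = speedup(cpu_only["cpu_test_2_fps"], gpu_physx["cpu_test_2_fps"])
overall = speedup(cpu_only["overall"], gpu_physx["overall"])

print(f"CPU Test 2: {test2:.2f}x")
print(f"Overall score: {overall:.2f}x (+{(overall - 1) * 100:.1f}%)")
```

Run as-is, it reports a CPU Test 2 ratio of about 8.55x and an overall score gain of about 22%. The CPU Test 2 figure falls within the 5 to 20 times range Taylor quoted for GPU physics, while the overall score moves far less because CPU Test 2 is only one component of it.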

HotHardware: Since your driver seems to be actually changing the system-level workload distribution of 3DMark Vantage, is it your opinion that this is fundamentally changing the benchmark?

NVIDIA, Taylor: The physics test simply switches from the PPU to the GPU. The workload doesn't change at all, but where it is processed in the test machine does. This doesn't change the benchmark, just which processor in your machine handles it.

HotHardware: Do you expect Futuremark to sanction this driver, or another driver with similar optimizations, as an approved 3DMark Vantage driver?

NVIDIA, Taylor: It's fair to say that if the technology supports actual games (which it does), and the benchmark is supposed to reflect performance in games, then it stands to reason that our technology will eventually be adopted in the Futuremark BDP approval process.

HotHardware: What has the adoption rate in the developer community been like, since you've rolled out Ageia technology and IP into your graphics product line?

NVIDIA, Taylor: The adoption rate has been fabulous among the developer community. Our surveys say that about 66% of developers not currently using PhysX are planning to adopt it in the future. Support for PhysX spans multiple platforms as well, including Xbox 360, PS3 and Wii, with moderate to no licensing fees. Put it this way: if you were a developer limited to doing physics for your game or other application on the CPU, why wouldn't you support it on the GPU, especially if you can do better effects, and more of them, this way? Furthermore, if developers already use PhysX on the CPU, then GPU-enabling the game will be a simple drop-in.

A word from Futuremark: And what does Futuremark have to say about this? Oliver Baltuch of Futuremark had this to offer with respect to NVIDIA's new technology, 3DMark Vantage and the industry as a whole:

"The driver in question has not been submitted for authorization and is for demo purposes only. NVIDIA has followed the correct rules for driver authorization and the BDP by sending us the 177.35 driver (just as AMD has sent us the 8.6 driver), and both are currently undergoing the authorization process in our Quality Assurance area. Only drivers that have passed WHQL and our driver authorization process produce comparable results, and only those will be allowed in our ORB database and Hall of Fame.

Other drivers that have not been submitted will not be commented on; otherwise, we would have to inspect every beta and press driver that is released. Outside of this matter, we have been introduced to this technology by NVIDIA, and it is truly innovative for future games and game development. As you know, we have an actual game coming as well, and it could also make use of PhysX on the GPU."

Mark Rein, Epic Games chimes in: And finally, to round out the picture, Mark Rein of Epic Games had this to say: "We couldn't care less about synthetic benchmarks. Real players play real games, and in this case there is a real performance gain for a large number of users playing our game. The updated drivers that people are talking about allow properly equipped Unreal Tournament 3 players to use some amazing UT3 content that was specifically designed to take advantage of hardware-accelerated physics. So now that content works on high-end Nvidia graphics cards, as opposed to Ageia PPUs. This is a great thing, as high-end Nvidia cards number in the millions, or tens of millions, compared to Ageia PPUs, which obviously numbered far, far fewer. So Nvidia has done a cool optimization that allows their customers to get more value and performance out of their graphics cards. How anyone could possibly mistake this for a bad thing is beyond me."

Unreal Tournament 3 with GPU PhysX

So there you have it. In short, NVIDIA was pretty up front, issuing an actual press release on the driver. The company insists that nothing in the benchmark has been changed other than which processor(s) in your system handle it. Finally, Futuremark doesn't have a problem with the driver (and actually called the technology innovative), nor with how NVIDIA has worked within the BDP to get its current non-PhysX WHQL driver approved. More importantly, new PhysX-capable drivers will actually offer a better overall game play experience in real games, as Mr. Rein's rather firm response makes clear. So what's all the fuss about? Exactly. Move along...there's nothing to see here, except perhaps better physics effects.